Pixels in. World state out.

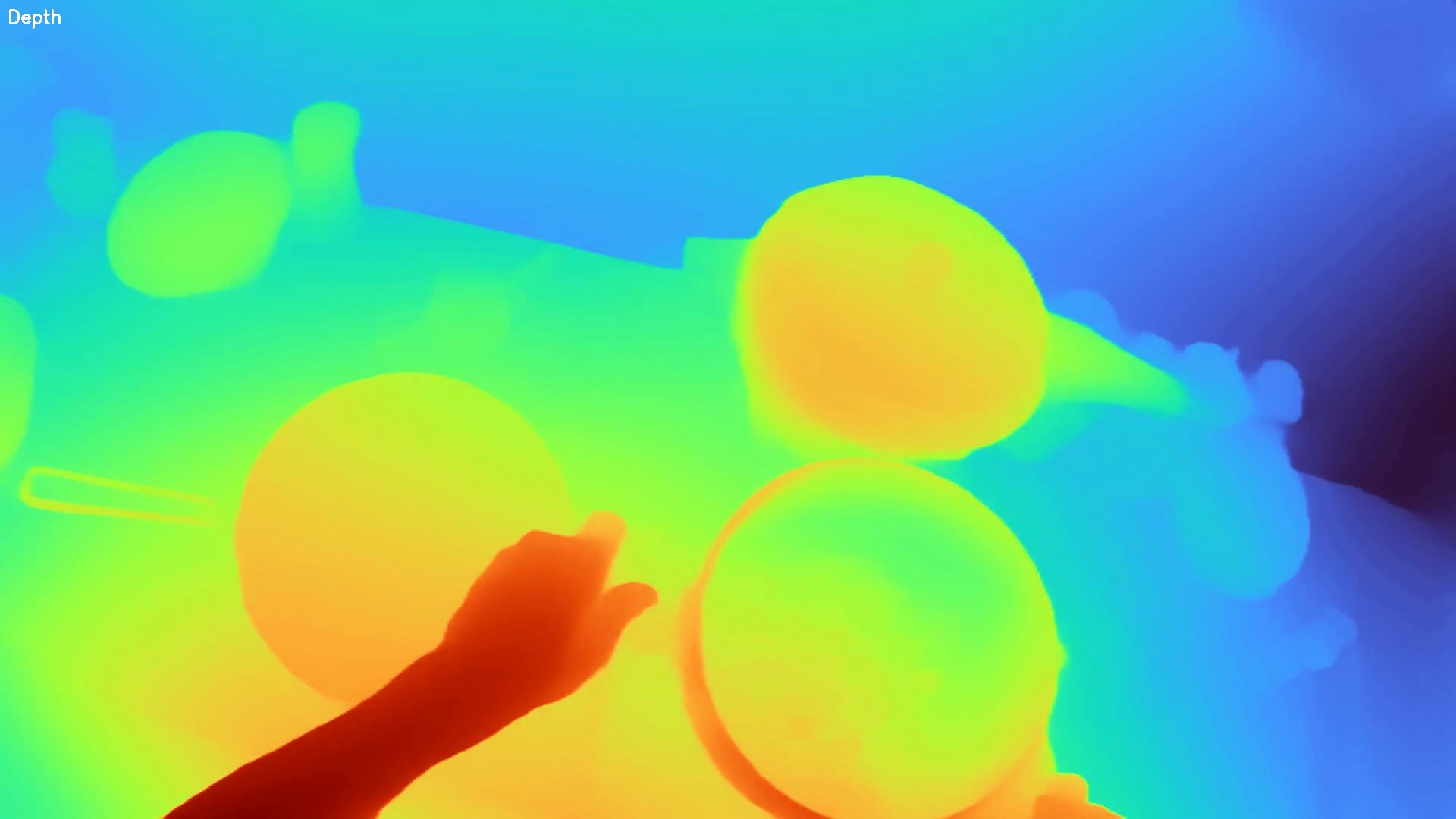

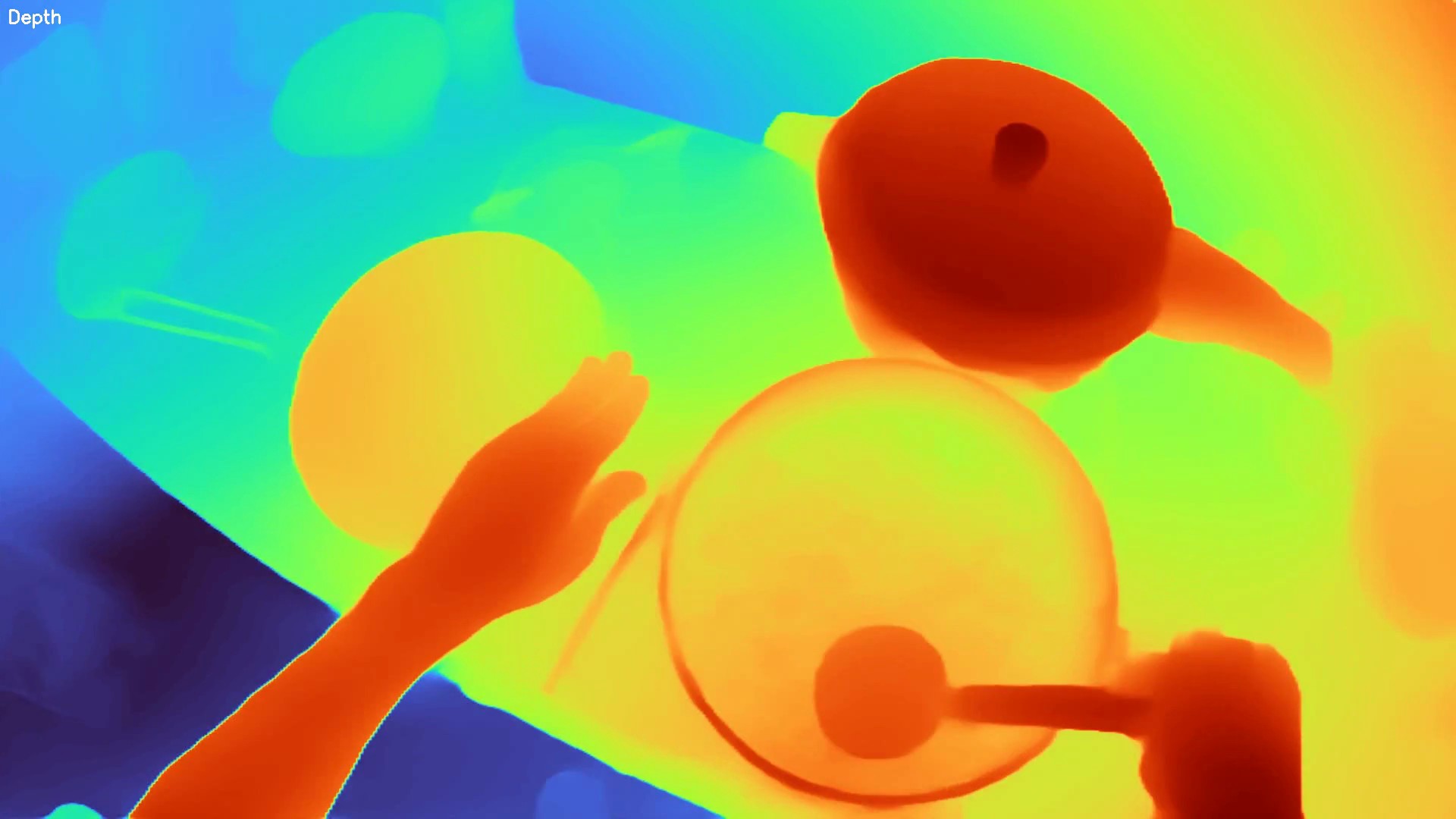

We capture egocentric and overhead video in real environments and lift every frame into structured world state — dense depth, panoptic segmentation, hand pose — in real time, on commodity hardware.

Focus Areas

Spatial Intelligence

Real-time 3D scene understanding, spatial reasoning, and world modeling for embodied agents.

Vision-Language Models

Multimodal architectures connecting visual perception with language understanding and generation.

Embodied AI

Training agents that perceive and act in the physical world — from robotics to AR/VR systems.

World Index — Live Samples

Our stack lifts ordinary RGB video into structured world state — dense depth, panoptic segmentation, and hand pose — running in real time on commodity hardware. Below: raw outputs captured from egocentric and overhead viewpoints in unstructured everyday environments.

Latest Research

View all →Spatial Reasoning in Vision Transformers: A Survey and New Directions

We survey recent advances in spatial reasoning capabilities of vision transformers and propose a new benchmark for evaluating 3D scene understanding from 2D inputs.

Building a Real-Time World Index: From Pixels to Semantic Maps

How we approach the problem of building structured, queryable representations of physical environments from streaming video — our architecture and lessons learned.

Open-Vocabulary Object Detection on the Edge: Practical Tricks That Work

Notes from our experience deploying open-vocabulary detection models on edge devices — what works, what doesn't, and the trade-offs we've made.